What is Azure Databricks?

Azure Databricks is a data analytics platform that provides powerful computing capability, and the power comes from the Apache Spark cluster. In addition, Azure Databricks provides a collaborative platform for data engineers to share the clusters and workspaces, which yields higher productivity. Azure Databricks plays a major role in Azure Synapse, Data Lake, Azure Data Factory, etc., in the modern data warehouse architecture and integrates well with these resources.

Data engineers and data architects work together with data and develop the data pipeline for data ingestion with data processing. All data engineers work in a sandbox environment, and when they have verified the data ingestion process, the data pipeline is ready to be moved to Dev/Staging and Production.

Manually moving the data pipeline to staging/production environments via Azure portal will potentially introduce the difference in environments and add a tedious task to repeat manual processes in multiple environments. Automated deployment with service principal credentials is the only solution to move all your work to higher environments. There will be no privilege to configure via the Azure portal as a user. As data engineers complete the data pipeline, Cloud automation engineers will use IaC (Infrastructure as Code) to deploy all Azure resources and configure them via the automation pipeline. That includes all data related to Azure resources and Azure Databricks.

Data engineers work in Databricks with their user account, and it works very well integrating Azure Databricks with Azure key vault using key vault secret scope. All the secrets are persisted in key vault, and Databricks can get the secret value directly via linked service. Databricks uses user credentials to go against Keyvault to get the secret values. This does not work with service principal (SPN) access from Azure Databricks to the key vault. This functionality is requested but not yet there as per this GitHub issue.

JOIN OUR TEAM

Passionate about data? Check out our open data careers and apply to join our quickly growing team today!

Let’s Look at a Scenario

The data team has given automation engineers two requirements:

- Deploy an Azure Databricks, a cluster, a dbc archive file which contains multiple notebooks in a single compressed file (for more information on dbc file, read here), secret scope, and trigger a post-deployment script.

- Create a key vault secret scope local to Azure Databricks so the data ingestion process will have secret scope local to Databricks.

Azure Databricks is an Azure native resource, but any configurations within that workspace is not native to Azure. Azure Databricks can be deployed with Hashicorp Terraform code. For Databricks workspace-related artifacts, the Databricks provider needs to be added. For creating a cluster, use this implementation. If you are only uploading a single notebook file for creating a notebook, then use Terraform implementation like this. If not, there is an example below to use Databricks CLI to upload multiple notebook files as a single dbc archive file. The link to my GitHub repo for complete code is at the end of this blog post.

Terraform implementation

terraform {

required_providers {

azurerm = "~> 2.78.0"

azuread = "~> 1.6.0"

databricks = {

source = "databrickslabs/databricks"

version = "0.3.7"

}

}

backend "azurerm" {

resource_group_name = "tf_backend_rg"

storage_account_name = "tfbkndsapoc"

container_name = "tfstcont"

key = "data-pipe.tfstate"

}

}

provider "azurerm" {

features {}

}

provider "azuread" {

}

data "azurerm_client_config" "current" {

}

// Create Resource Group

resource "azurerm_resource_group" "rgroup" {

name = var.resource_group_name

location = var.location

}

// Create Databricks

resource "azurerm_databricks_workspace" "databricks" {

name = var.databricks_name

location = azurerm_resource_group.rgroup.location

resource_group_name = azurerm_resource_group.rgroup.name

sku = "premium"

}

// Databricks Provider

provider "databricks" {

azure_workspace_resource_id = azurerm_databricks_workspace.databricks.id

azure_client_id = var.client_id

azure_client_secret = var.client_secret

azure_tenant_id = var.tenant_id

}

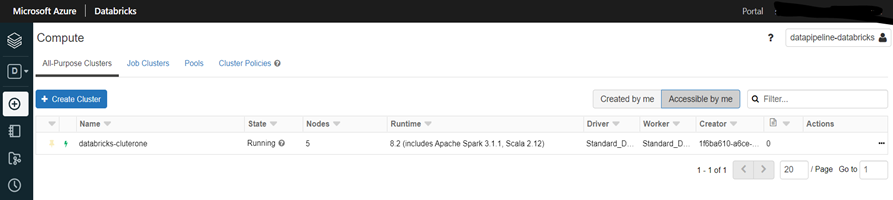

resource "databricks_cluster" "databricks_cluster" {

depends_on = [azurerm_databricks_workspace.databricks]

cluster_name = var.databricks_cluster_name

spark_version = "8.2.x-scala2.12"

node_type_id = "Standard_DS3_v2"

driver_node_type_id = "Standard_DS3_v2"

autotermination_minutes = 15

num_workers = 5

spark_env_vars = {

"PYSPARK_PYTHON" : "/databricks/python3/bin/python3"

}

spark_conf = {

"spark.databricks.cluster.profile" : "serverless",

"spark.databricks.repl.allowedLanguages": "sql,python,r"

}

custom_tags = {

"ResourceClass" = "Serverless"

}

}

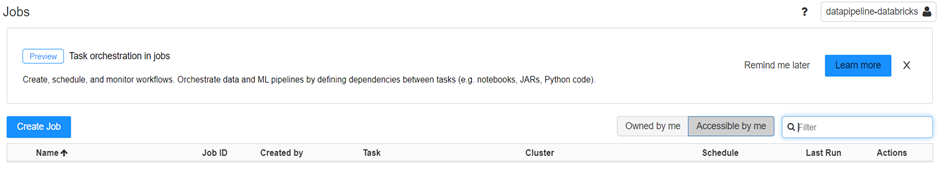

GitHub Actions workflow with Databricks CLI implementation

deploydatabricksartifacts:

needs: [terraform]

name: 'Databricks Artifacts Deployment'

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v2.3.4

- name: Set up Python 3.0

uses: actions/setup-python@v2

with:

python-version: 3.0

- name: Install dependencies

run: |

python -m pip install --upgrade pip

- name: Download Databricks CLI

id: databricks_cli

shell: pwsh

run: |

pip install databricks-cli

pip install databricks-cli --upgrade

- name: Azure Login

uses: azure/login@v1

with:

creds: ${{ secrets.AZURE_CREDENTIALS }}

- name: Databricks management

id: api_call_databricks_manage

shell: bash

run: |

# Set DataBricks AAD token env

export DATABRICKS_AAD_TOKEN=$(curl -X GET -d "grant_type=client_credentials&client_id=${{ env.ARM_CLIENT_ID }}&resource=2ff814a6-3304-4ab8-85cb-cd0e6f879c1d&client_secret=${{ env.ARM_CLIENT_SECRET }}" https://login.microsoftonline.com/${{ env.ARM_TENANT_ID }}/oauth2/token | jq -r ".access_token")

# Log into Databricks with SPN

databricks_workspace_url="https://${{ steps.get_databricks_url.outputs.DATABRICKS_URL }}/?o=${{ steps.get_databricks_url.outputs.DATABRICKS_ID }}"

databricks configure --aad-token --host $databricks_workspace_url

# Check if workspace notebook already exists

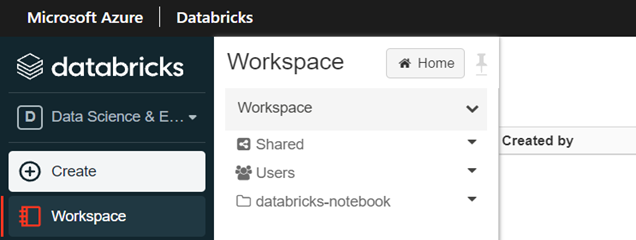

export DB_WKSP=$(databricks workspace ls /${{ env.TF_VAR_databricks_notebook_name }})

if [[ "$DB_WKSP" != *"RESOURCE_DOES_NOT_EXIST"* ]];

then

databricks workspace delete /${{ env.TF_VAR_databricks_notebook_name }} -r

fi

# Import DBC archive to Databricks Workspace

databricks workspace import Databricks/${{ env.databricks_dbc_name }} /${{ env.TF_VAR_databricks_notebook_name }} -f DBC -l PYTHON

While the above example shows how to leverage Databricks CLI to do automation operations within Databricks, Terraform also provides richer capabilities with Databricks providers. Here is an example of how to add ‘service principal’ to Databricks ‘admins’ group in workspace using Terraform. This is essential for Databricks API to work when connecting as a service principal.

Not just Terraform and Databricks CLI, but also Databricks API provides similar options to access Databricks artifacts and manage them. For example, to access the clusters in the Databricks:

- To access clusters, first, authenticate if you are a workspace user via automation or using service principal.

- If your service principal is already part of the workspaces admins group, use this API to get the clusters list.

- If the service principal (SPN) is not part of the workspace, use this API that uses access and management tokens.

- If you would rather add the service principal to Databricks admins workspace group, use this API (same as Terraform option above to add the SPN).

The secret scope in Databricks can be created using Terraform or using Databricks CLI or using Databricks API!

Databricks with other Azure resources have pretty good documentation, and for automating deployments, these options are essential: learn and use the best option that suits the needs!

Here is the link to my GitHub repo for complete code on using Terraform, Databricks CLI in GitHub Actions! In addition, you can find a bonus learning how to deploy synapse, ADLS, etc., as part of modern data warehouse deployment, which I will cover in my next blog post.

Until then, happy automating!