Welcome to part eight of the blog series based on Vishwas Lele’s PluralSight course: Applied Azure. Previously, we’ve discussed Azure Web Sites, Azure Worker Roles, Identity and Access with Azure Active Directory, Azure Service Bus and MongoDB, HIPPA Compliant Apps in Azure and Offloading SharePoint Customizations to Azure and “Big Data” with Windows Azure HDInsight.

Motivation

Big Compute refers to running large scale applications which utilize large amounts of CPU and/or memory resources. These resources are provided by using a cluster of computers and the applications are distributed across the cluster. The key concept is to distribute the application to run on multiple machines so as to execute computations simultaneously in parallel. Problems in the financial, scientific and engineering fields often require computations which would take several days or longer if executed on a single computer. Big Compute solutions significantly reduce the solution time dramatically from days to hours or less, depending on how many machines are added to the compute cluster. Big Compute differs subtly from “Big Data” in that the latter is more about using disk capacity and IO performance of a cluster of computers in order to analyze large volumes of data, whereas Big Compute is primarily about utilizing CPU power in a cluster to perform computations. In order to harness the resources of multiple machines, a Big Compute solution also requires components to handle the configuration and scheduling of the individual component computations – this is usually the role of a ‘head node’ in the compute cluster. Microsoft’s HPC (High Performance Computing) platform is a key aspect of their Big Compute offerings. HPC provides all the components necessary to configure, schedule and execute computations in a distributed cluster. Microsoft’s HPC solution is supported in on-premises environments as well as in the Azure cloud, both in an IaaS configuration as well as via an Azure HPC scheduler. Since the publishing of the Pluralsight course, there have been continued developments from Microsoft on the Big Compute offerings in Azure, in particular the new Azure Batch offering which is currently in preview mode.

Excel is heavily used as the tool of choice for computation; it is ubiquitous in the Financial Services industry and is also widely used in many others such as Health Care and Pharmaceuticals. Analysts are using Excel to compute large numbers of calculations, often resulting in the Excel workbook running for several hours or longer on a single computer. There is an increasing need to be able to reduce the computation times significantly, and this is where HPC Services for Excel comes in. The Excel workbook can be treated similarly to other types of applications computed in parallel on a distributed cluster. HPC is able to schedule the parallel execution of Excel workbooks (or parts of a computation used in a workbook) across multiple machines.

Scenarios

Typical scenarios for running Big Compute applications are financial modeling, risk analysis, rendering, media transcoding, image analysis, digital design and manufacturing and engineering applications such as fluid dynamics.

Key Mechanisms

Applications fall into two main categories, embarrassingly parallel applications and tightly coupled applications. The former is so-named because the overall problem can be split into computations which can be run independently acting on different sets of input data with the final result being a collation of the results of the independently executed computations. Monte Carlo simulations are a good example of embarrassingly parallel applications, where inputs to a model (the model could be an Excel workbook) are represented by a probability distribution, so the application can execute the workbook for each of the inputs obtained from the probability distribution and combine each result into the final probability distribution. Embarrassingly parallel computations scale well since the addition of computing nodes will continue to decrease the overall time of computation as there is no communication between tasks.

Tightly coupled applications have the characteristic where each individual computation requires interaction with other computations, such as the exchange of intermediate results. Compute resources are therefore needed for the interaction/communication between individual compute nodes. HPC provides support for a standard protocol for communication in parallel computing called MPI, the Message Passing Interface. The scalability of the computation may be affected by parallel slowdown – a communications bottleneck where, as compute node nodes are added each node spends more and more time on communication than on computation. An example of a tightly coupled computation is a car crash simulation where the events are highly interdependent.

Use of Excel in HPC compute clusters falls into the category of intrinsically parallel computation. Excel applications running in HPC can be broken up into two additional calculation types: running Excel workbooks on a cluster and running Excel UDFs on a cluster. An entire workbook may be executed on each compute node via a Service Oriented Architecture (SOA) approach, where HPC provides the necessary infrastructure to load and execute workbooks via WCF services. Alternatively, a single workbook executing on the client may offload user-defined functions (UDFs) that are implemented in XLL files on the cluster.

Core Concept

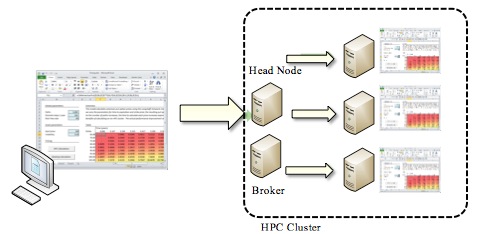

In the PluralSight course, Vishwas gives a demonstration of the use of HPC Services for Excel. The diagram below illustrates the core concept of the demo:

The left hand side of the diagram represents the client which initiates the computation. The client may be an Excel workbook itself, or it may be another application (such as a Windows app, or even a Web app running on a web server). The HPC compute cluster is represented on the right hand side of the diagram. The key components of the cluster as shown in the diagram are:

Compute nodes (the three machines on the far right of the diagram):

These are the individual machines which will be performing each computation of the Excel workbook. In this example, we are offloading an entire workbook to the cluster as opposed to just computations encased in Excel User Defined Functions. Therefore, the Excel program needs to be installed on each compute node. The code to load a workbook and perform the application specific operations on the workbook is contained in a WCF service instance installed on each node.

Head Node and Broker Node:

The head node is responsible for the cluster management and job scheduling. The client communicates with the head node to initiate a job. Once a job is scheduled and resources allocated by the head node, responsibility of communication between the client and the compute nodes is passed to the broker node. This is because our example is using the SOA method, where the computations are being executed by WCF service instances, and the broker node is responsible for handling the WCF communications.

Design Considerations

- Offload entire workbook vs. UDF

If you’re building an Excel based HPC application, the first key decision is whether to offload the entire workbook to the cluster or just the UDFs. It depends on the kind of application you are building. If the bulk of the computation logic can be encapsulated into XLL file UDFs, and the client to the cluster can be an Excel workbook itself, then the UDF option is a possibility. If the entire workbook needs to run on each compute instance, the SOA based design is required.

- Keeping compute nodes in sync

You have to ensure that the workbook instance and version is the same across all compute nodes in the cluster. This problem is exacerbated if you have a cluster that spans on-premises as well as Azure compute nodes. A synchronization strategy needs to be in place.

- Data intensive applications (Big Data)

HPC server offered a data intensive application type, but going forward Microsoft recommends using HDInsight (Microsoft’s Hadoop implementation) on the MS Azure platform.