AIS developed a prototype that highlights the features and capabilities of open standards for geospatial processing and real-time data sharing through web applications. If you haven’t already, please click here to read part one.

After getting the VIIRS data into our application using GeoServer, our next objective was to enhance the prototype to demonstrate some of the exciting things AIS is able to do through the use of various web technologies. Our goal was to provide a highly collaborative environment where clients on a variety of devices could all interact in real time with map data.

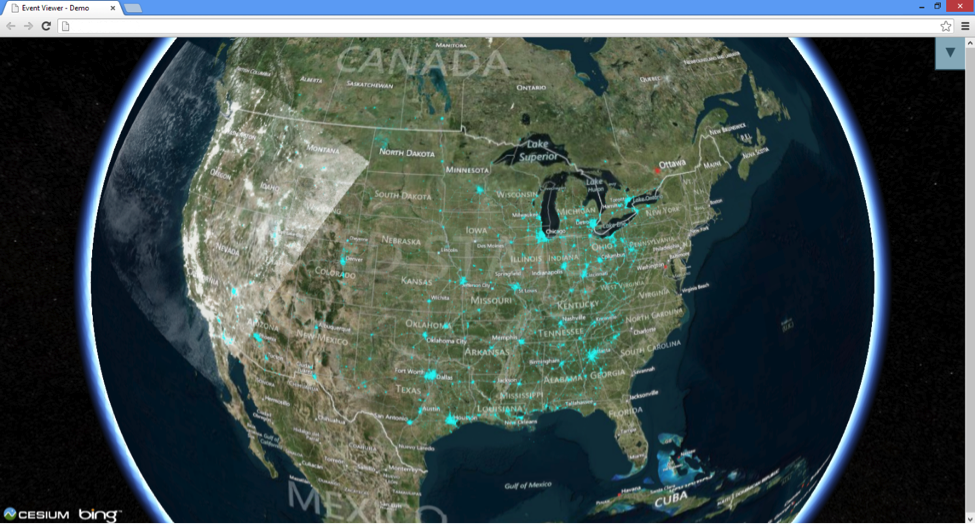

The first thing we did was add a 3D map view using the Cesium JavaScript library, along with the ability to switch back and forth between the 2D and 3D views. Figure 1 shows our application displaying WMS layers on a 3D map. Cesium natively supports WMS layers, so this piece of the implementation was straightforward. To support WFS layers, we had to manually generate the WFS request to GeoServer and retrieve the data in GeoJSON format. Cesium is then able to take this GeoJSON data and render the appropriate features on the map.
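As a rough illustration of the manual WFS request, the sketch below builds a GetFeature URL that asks GeoServer for GeoJSON output. The helper name, the base URL, and the layer name are hypothetical placeholders, and the choice of WFS version 1.1.0 and `EPSG:4326` is an assumption rather than a detail of our prototype.

```typescript
// Hypothetical request description; none of these values come from the prototype.
interface WfsRequest {
  baseUrl: string;      // e.g. "https://example.com/geoserver/wfs" (placeholder)
  typeName: string;     // workspace-qualified layer name (placeholder)
  maxFeatures?: number; // optional cap on the number of returned features
}

// Builds a WFS GetFeature URL that requests GeoJSON from GeoServer.
function buildWfsGeoJsonUrl(req: WfsRequest): string {
  const params: Array<[string, string]> = [
    ["service", "WFS"],
    ["version", "1.1.0"],                 // assumed version
    ["request", "GetFeature"],
    ["typeName", req.typeName],
    ["outputFormat", "application/json"], // GeoServer's GeoJSON output format
    ["srsName", "EPSG:4326"],             // assumed: ask for geographic coordinates
  ];
  if (req.maxFeatures !== undefined) {
    params.push(["maxFeatures", String(req.maxFeatures)]);
  }
  const query = params
    .map(([key, value]) => `${encodeURIComponent(key)}=${encodeURIComponent(value)}`)
    .join("&");
  return `${req.baseUrl}?${query}`;
}
```

In current Cesium releases, a URL like this (or the GeoJSON object fetched from it) can be handed to `Cesium.GeoJsonDataSource.load`, which turns the features into renderable entities.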

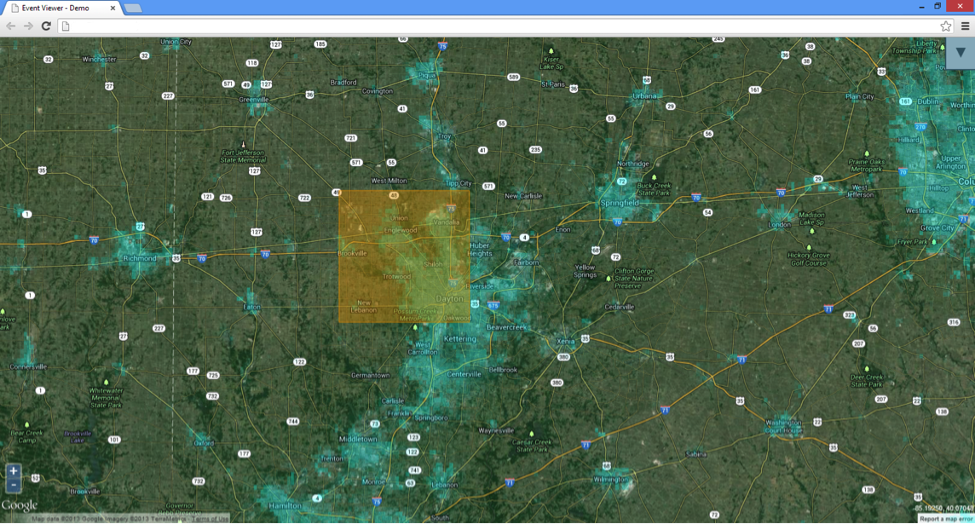

The next step was to allow users to draw annotations on the map. These annotations are polygons drawn on top of map layers to highlight areas of interest on a particular layer. Figure 2 shows an annotation drawn on top of a WMS layer. When a user draws an annotation, we use SignalR to push it to all other users and draw it on their maps in real time. Once the annotation is drawn, users can add details and chat about it.
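The push-and-draw flow can be sketched on the client side as follows. This is a minimal sketch, not our actual code: `HubLike` is a hypothetical interface that mirrors the `invoke` and `on` methods of a SignalR hub connection, and the method names `ShareAnnotation` and `annotationAdded`, as well as the `Annotation` shape, are assumptions made for illustration.

```typescript
// Hypothetical annotation payload: a polygon tied to one map layer.
interface Annotation {
  id: string;
  layerId: string;
  vertices: Array<{ lon: number; lat: number }>;
  author: string;
}

// Minimal stand-in for a SignalR hub connection (only the two methods used here).
interface HubLike {
  invoke(method: string, ...args: unknown[]): Promise<unknown>;
  on(method: string, handler: (...args: any[]) => void): void;
}

// Registers a handler for annotations pushed by the server and returns a
// function for sharing locally drawn annotations with everyone else.
function wireAnnotations(hub: HubLike, draw: (a: Annotation) => void) {
  // Assumed client-side event name; fired whenever any user adds an annotation.
  hub.on("annotationAdded", (a: Annotation) => draw(a));
  return {
    // Assumed server-side hub method that rebroadcasts to connected clients.
    share: (a: Annotation): Promise<unknown> => hub.invoke("ShareAnnotation", a),
  };
}
```

Keeping the drawing callback separate from the hub wiring means the same code path can serve both the 2D and the 3D map; only the `draw` function differs.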

The challenge with drawing and displaying annotations was to make the experience seamless for all users, regardless of the device they use and which map type they are currently viewing. If a user draws an annotation on a phone using the 3D map, we need to draw that annotation in real time on the map of a tablet user viewing the 2D map. To do this, we store the map coordinates of the polygon's vertices and send them to the clients, which use them to draw the polygon on their own maps. However, since the 2D and 3D maps do not use the same map coordinate system, each client has to translate the coordinates into its own coordinate system before drawing the polygon.
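To make the translation step concrete, here is a minimal sketch under two loudly stated assumptions: that vertices are shared as longitude/latitude in degrees, and that the 2D map works in Web Mercator (EPSG:3857) meters. The post does not name the actual coordinate systems, so treat both as illustrative; the function and type names are hypothetical.

```typescript
interface LonLat { lon: number; lat: number } // degrees (assumed shared format)
interface XY { x: number; y: number }         // Web Mercator meters (assumed 2D map CRS)

const EARTH_RADIUS_M = 6378137; // WGS84 semi-major axis, as used by Web Mercator

// Shared lon/lat vertex -> coordinates for an (assumed) Web Mercator 2D map.
function lonLatToWebMercator(p: LonLat): XY {
  const lonRad = (p.lon * Math.PI) / 180;
  const latRad = (p.lat * Math.PI) / 180;
  return {
    x: EARTH_RADIUS_M * lonRad,
    y: EARTH_RADIUS_M * Math.log(Math.tan(Math.PI / 4 + latRad / 2)),
  };
}

// Inverse: 2D map coordinates -> lon/lat for sharing or for the 3D globe.
function webMercatorToLonLat(p: XY): LonLat {
  const lonRad = p.x / EARTH_RADIUS_M;
  const latRad = 2 * Math.atan(Math.exp(p.y / EARTH_RADIUS_M)) - Math.PI / 2;
  return { lon: (lonRad * 180) / Math.PI, lat: (latRad * 180) / Math.PI };
}

// Translate a whole polygon before drawing it on the 2D map.
function polygonToWebMercator(vertices: LonLat[]): XY[] {
  return vertices.map(lonLatToWebMercator);
}
```

Sharing one neutral representation and converting at the edge keeps the server unaware of which map type each client is showing.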

The last objective of this phase of the prototype development was to add real-time screen sharing capabilities. This took the collaborative aspect of the application to the next level. The goal was to allow a user to share information about their current application state with other connected users, such as which position on the map they were viewing and which layers they had displayed. However, we didn’t want to implement a complete screen sharing solution (such as Lync or GoToMeeting), because we wanted the clients to still be able to interact with what the host was showing them.

We once again enlisted SignalR to help with this task. When the host changes their position on the map or toggles the visible layers, we send this data to the clients and instruct them to update their map position and visible layers as well. As was the case with displaying annotations, having both 2D and 3D clients made this more challenging. To replicate the map position more accurately across 2D and 3D clients, we send the coordinates of the four corners of the map viewport, as well as the coordinates of the center of the map, once again translating between the differing coordinate systems. This allowed us to create smooth, real-time map mirroring that translated well across various devices.

Through our use of cutting-edge web technologies, we were able to enhance our prototype to demonstrate a highly collaborative environment for sharing geospatial data. By developing a browser-based solution, we are able to deliver this application across a wide variety of devices.
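As an illustration of the map mirroring described above, the sketch below shows one possible shape for the shared view state and a helper that reduces the four viewport corners to a bounding rectangle a client can zoom to. The `SharedViewState` type and `boundsFromCorners` helper are hypothetical, coordinates are assumed to be longitude/latitude in degrees, and viewports that cross the antimeridian are not handled.

```typescript
interface LonLatPoint { lon: number; lat: number } // degrees (assumed)

// Hypothetical message the host sends whenever the view or layer list changes.
interface SharedViewState {
  corners: [LonLatPoint, LonLatPoint, LonLatPoint, LonLatPoint]; // viewport corners
  center: LonLatPoint;
  visibleLayers: string[];
}

interface Bounds { west: number; south: number; east: number; north: number }

// Collapse the four corners into an axis-aligned rectangle. On a tilted 3D
// view the corners form an irregular quadrilateral, so this is approximate.
function boundsFromCorners(state: SharedViewState): Bounds {
  const lons = state.corners.map((c) => c.lon);
  const lats = state.corners.map((c) => c.lat);
  return {
    west: Math.min(...lons),
    south: Math.min(...lats),
    east: Math.max(...lons),
    north: Math.max(...lats),
  };
}
```

A 3D client could pass such bounds to Cesium's `Rectangle.fromDegrees` and fly the camera to the result, while a 2D client would first convert them into its own map coordinates.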

Click here to read more about AIS’ innovative work with custom web applications.