Containers are, for good reason, getting a lot of attention. For the cost of having to manage some complexity, they provide a unique level of flexibility, the ability to scale, the ability to run software across cloud and on-premises environments…the list of benefits can go on and on. And usually when you hear about containers in the technical press, they’re included in an overarching story about an organization that moved to some highly scalable, microservices-based architecture to meet its ridiculous capacity demands (Netflix, Google, etc.).

At the most basic level, however, containers are about being able to streamline the process of installing and running software. In fact, the fundamental concepts behind containers map almost one-to-one with what’s been traditionally required to install a piece of software on your laptop:

- Container images are like “installers” (.msi, .exe, or .zip packages).

- A container instance is like the software process running on your machine.

When you remove a container image, you remove the “installer”; remove the container and you uninstall the software.

The difference with containers is that you no longer install that software directly on the host computer. Containers are isolated sets of processes that run until you tell them not to, and can then be removed completely by simply stopping and removing the container. You pull a container image from a repository (think of a central location listing available software for download), start the container instance, and stop the container instance. In the time between when the container is started and stopped, a user has access to the application’s functionality. There is no need to perform a traditional install or uninstall.
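The pull, start, stop, and remove cycle described above can be sketched as a short sequence of commands. This is only an illustration: it assumes the Docker CLI as the container tool (the post does not name one), and the image name `nginx:latest` and container name `web` are placeholder examples. The function just builds the commands; it does not run them.

```python
import shlex
from typing import List


def container_lifecycle(image: str, name: str) -> List[List[str]]:
    """Return the Docker CLI commands for one full container lifecycle.

    Assumes the Docker CLI; nothing is executed here.
    """
    return [
        # Download the "installer" (the image) from a repository.
        ["docker", "pull", image],
        # Start a container instance from the image, in the background.
        ["docker", "run", "--detach", "--name", name, image],
        # Stop the running container instance.
        ["docker", "stop", name],
        # Remove the container: the equivalent of uninstalling the software.
        ["docker", "rm", name],
        # Remove the image: the equivalent of deleting the installer.
        ["docker", "rmi", image],
    ]


if __name__ == "__main__":
    # Placeholder image and container names, chosen for illustration only.
    for command in container_lifecycle("nginx:latest", "web"):
        print(shlex.join(command))
```

Each printed line can be pasted into a shell on a machine where Docker is installed. Nothing is installed on the host itself at any step; the last two commands leave it as it was before the pull.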

But how is this “streamlining” accomplished, and what is in a container that allows it? The answer is that containers provide the ability to package a software application’s set of unique processes, and then share non-unique (but still essential) processes that already exist on a host operating system. Containers take advantage of the fact that these non-unique processes are installed when a host operating system is installed, and can be shared with any other process running on that host.

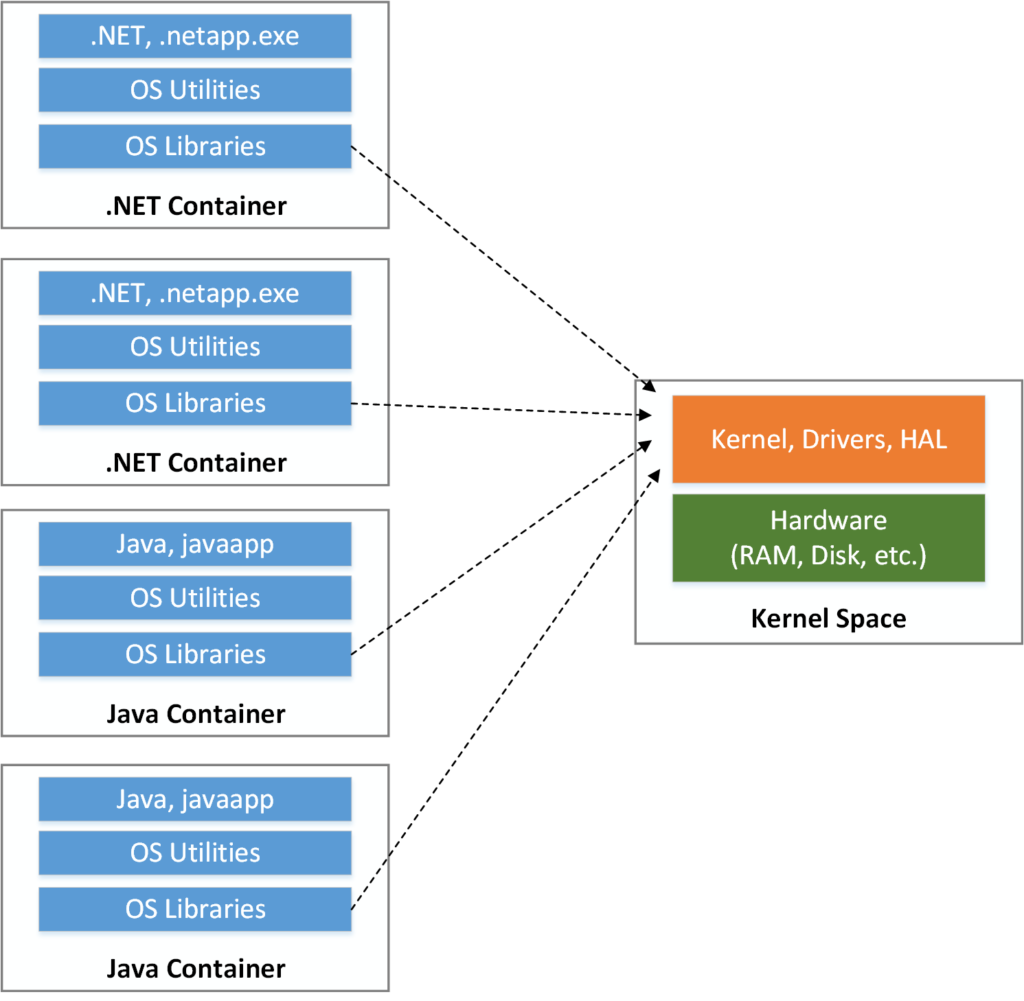

To visualize this, start by considering the following:

This diagram depicts the interaction between the various layers of a software system, and the separation of the layers into two distinct groups:

- The “Kernel Space” includes a hardware layer (your disk, memory, etc.) and a “kernel” which allows access to the hardware. The kernel is included in an operating system and is the bridge between applications and hardware.

- The “User Space” includes additional components from the operating system, and the software application itself. The OS-specific components are the core libraries provided by the operating system and the “utilities” – software applications that come packaged along with the operating system.

The interaction between these two layers occurs through system calls, which are privileged commands initiated by OS libraries in the User Space and received and acted on by the kernel in the Kernel Space.
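To make the idea of a system call a bit more concrete, here is a minimal sketch in Python. It assumes a Unix-like host, where the functions in the standard `os` module are thin wrappers over the corresponding system calls. The function name `demo_system_calls` is made up for this example.

```python
import os


def demo_system_calls() -> dict:
    """Exercise a few thin wrappers around kernel system calls."""
    # getpid: ask the kernel for the ID of the current process.
    pid = os.getpid()

    # pipe: ask the kernel to create a one-way, in-memory channel.
    read_fd, write_fd = os.pipe()
    try:
        # write / read: the kernel copies the bytes through the pipe;
        # the user-space code only hands over a buffer and a descriptor.
        written = os.write(write_fd, b"hello kernel")
        data = os.read(read_fd, written)
    finally:
        # close: hand both descriptors back to the kernel.
        os.close(read_fd)
        os.close(write_fd)

    return {"pid": pid, "bytes_written": written, "data": data}


if __name__ == "__main__":
    print(demo_system_calls())
```

Every call here starts in the User Space (the Python interpreter and the OS libraries it links against) and is carried out by the kernel. Processes inside a container make the same kinds of calls, and they are served by the host's kernel.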

Now, consider the fact that a container image is a package of all processes in the User Space needed for a specific application to function normally. There can be one or more processes included in the image, but when a container instance is created from the image, the processes running in the container instance will be unique and isolated from the processes on the host and from any other running container instance.

This architecture allows for interesting possibilities, including (not an exhaustive list):

- Multiple instances of an application running on the same host, sharing hardware but still maintaining process isolation.

- Portability across host machines without the traditional ceremony around installing and configuring software.

- Slimmed-down application packages that only include the essentials required for the software application to run (not full virtual machines, etc.).

I first saw Wes Higbee make the point that containers help “simplify the use of software” here, and then again in his Pluralsight courses. These are very helpful and include more detailed discussion around containers and container architecture. However, the simple concept of “simplifying the use of software” helps me conceptualize the challenge containers are fundamentally addressing.