Technology is ever-evolving. It constantly offers us ways to improve procedures, create new products that solve problems, and challenge the status quo, because there is always a better way of doing things. The introduction of Application Programming Interfaces (APIs) transformed how applications are built and how they communicate with each other. Today, enterprises can incorporate new services and updates into their existing infrastructure without taking a big hit on the cost front.

Azure API Management is a strong tool for managing APIs. But when APIs are hosted on-premises and across different clouds, managing them efficiently and securely through a single cloud-hosted gateway becomes harder. That’s where self-hosted gateways come into the picture. Self-hosted gateways allow companies to control how and where users access their APIs.

This video explains what API Management stands for and how we can address the problem of providing unified API Management everywhere using self-hosted gateways.

Azure API Management

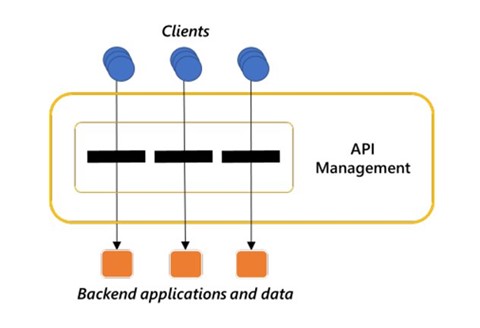

Multiple factors need to fall into place to create a healthy web application or API: security, bandwidth costs, latency optimization, performance management, restricted client access to APIs, and more. We can either build each of these capabilities by hand or get them through API Management; the latter is usually the better choice. API Management provides a bridge between the clients and the backend applications and data.

With the help of API Management, you create a virtual wall that hides the backend applications and data from the client. So, whenever an organization wants to migrate, move, or change its backend applications, it can do so without any impact on the clients that use them. API Management establishes a single point of entry, the only front door, so to speak, for the backend applications and data, and intelligently routes the traffic from clients to backend applications. It handles authentication and authorization and provides a guided control flow for clients. API Management solutions make our APIs more secure, efficient, and easy to use.
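The "single front door" idea can be sketched in a few lines of Python. This is a toy illustration, not how Azure API Management is implemented: the `Gateway` class, the backend URLs, and the `demo-key` subscription key are all hypothetical names made up for the example. It shows a client calling the same public path before and after the backend moves, with only the gateway's routing table changing.

```python
from dataclasses import dataclass


@dataclass
class Gateway:
    """Hypothetical in-memory stand-in for an API gateway's routing table."""

    routes: dict[str, str]  # public path prefix -> backend base URL
    valid_keys: set[str]    # subscription keys allowed through the front door

    def resolve(self, path: str, api_key: str) -> str:
        """Return the backend URL for a client request, or raise if rejected."""
        if api_key not in self.valid_keys:
            raise PermissionError("unknown subscription key")
        # Longest matching prefix wins, so /orders/v2 could override /orders.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path == prefix or path.startswith(prefix + "/"):
                return self.routes[prefix] + path[len(prefix):]
        raise LookupError(f"no API published at {path}")


# Made-up backend addresses; clients never see these.
gateway = Gateway(
    routes={"/orders": "http://orders-v1.internal:8080"},
    valid_keys={"demo-key"},
)
before = gateway.resolve("/orders/42", "demo-key")

# The backend is migrated; only the gateway's routing table changes.
gateway.routes["/orders"] = "http://orders-v2.internal:9090"
after = gateway.resolve("/orders/42", "demo-key")

print(before)  # http://orders-v1.internal:8080/42
print(after)   # http://orders-v2.internal:9090/42
```

The client's request (`/orders/42` with its key) is identical in both calls, which is the point of hiding backends behind the gateway.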

Self-hosted APIM Gateways

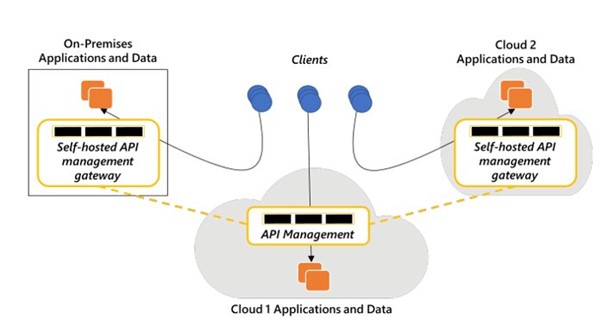

Introduced in 2019 by Microsoft, a self-hosted gateway enhances the capabilities of API Management, specifically for multi-cloud and hybrid scenarios. The self-hosted gateway enables the deployment of a containerized version of the default APIM gateway component in the same environments where you host your APIs. It empowers organizations to manage their APIs from a single centralized APIM instance irrespective of whether the APIs are hosted on-premises or on multiple clouds.

Why is a Self-hosted Gateway a Better Way of API Management?

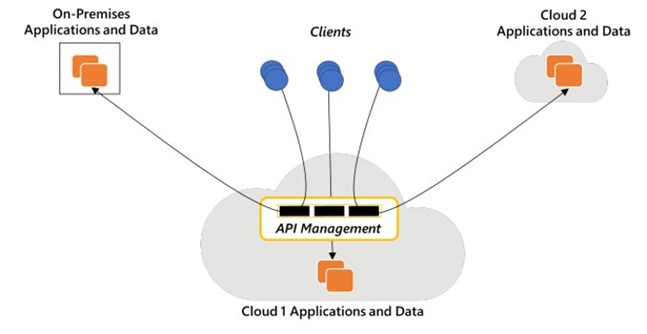

If an organization is aiming for a unified API Management solution, it needs to look beyond the traditional setup. Let’s understand this with the help of a scenario. The diagram below represents a typical API Management infrastructure spanning on-premises and a couple of public clouds.

Here you can see that API Management is deployed on Cloud 1, which also hosts applications and data. Another set of applications and data is deployed on-premises, and likewise on a second cloud referred to as Cloud 2. Now, whenever the client sends a request to the applications and data on Cloud 1, Cloud 2, or on-premises, the request first goes to API Management on Cloud 1 and is then routed to its destination.

While API Management can handle all these requests, that doesn’t mean it is the best way to do it. Consider using a self-hosted gateway, especially if you:

- want to enhance the security of your environment

- want to reduce costs and avoid additional charges

- want to reduce network latency

Enhanced Security and Compliance Adherence

What if the client is accessing on-premises applications and data within the same corporate environment, and none of the traffic is allowed to leave this environment? For some organizations, it is a compliance requirement that information must not flow outside the corporate network. By deploying a self-hosted gateway in the on-premises data center, you can eliminate the need to pass requests through the API Management gateway deployed on a public cloud outside the environment. The traffic stays inside the corporate network, which addresses both the compliance requirement and the API security concern.

Reduction in Overhead Costs

What if the client accesses applications and data hosted on a different public cloud? Cloud providers usually charge for outbound bandwidth, which is an extra cost that should be avoided where possible. By deploying a self-hosted gateway on that public cloud, the additional cost can be avoided. Consider the scenario below:

When a client wants to interact with Cloud 2, the request goes directly to the self-hosted API Management gateway deployed on Cloud 2, without passing through Cloud 1. This means companies need not pay data outflow charges twice. Using self-hosted gateways, you can discover, use, and manage APIs across on-premises and multi-cloud environments.
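To make "paying data outflow charges twice" concrete, here is a small worked example in Python. Every number in it is invented for illustration: the per-GB prices and the monthly volume are assumptions, not real cloud pricing. The model simply charges egress once for each cloud the response data leaves on its way back to the client.

```python
# All prices and volumes are made-up illustration values, not real pricing.
EGRESS_CENTS_PER_GB = {"cloud1": 8, "cloud2": 9}


def response_egress_cents(clouds_left: list[str], gigabytes: int) -> int:
    """Cost, in cents, of returning `gigabytes` of response data.

    `clouds_left` lists each cloud the data leaves on its way to the client;
    every cloud in the list bills its own egress charge once.
    """
    return sum(EGRESS_CENTS_PER_GB[cloud] * gigabytes for cloud in clouds_left)


monthly_gb = 500  # hypothetical response volume served from Cloud 2

# Central gateway on Cloud 1: data leaves Cloud 2, then leaves Cloud 1.
via_central = response_egress_cents(["cloud2", "cloud1"], monthly_gb)

# Self-hosted gateway on Cloud 2: data leaves Cloud 2 only.
via_self_hosted = response_egress_cents(["cloud2"], monthly_gb)

print(via_central)      # 8500
print(via_self_hosted)  # 4500
```

Under these assumed prices, the second egress charge accounts for 4,000 of the 8,500 cents, and that portion disappears once the gateway sits next to the backend.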

Faster Processing, Lower Latency

Even if the client and the backend services are in the same environment, say on-premises, every request still has to travel to the public cloud for API Management and back, which adds an unnecessary delay. Even when that delay is small, unnecessary latency is never ideal. A self-hosted gateway placed next to the backend services keeps the request local.

The gateway functionality of Azure API Management is packaged as a Linux-based Docker image. Because it is a Docker image, it can be run as a standalone container or deployed to a Kubernetes cluster. The self-hosted API Management gateway requires only an outbound connection to the main cloud service with which it is federated. This makes our APIs more secure, because the gateway only connects out to Azure API Management and no inbound connection from the cloud service needs to be allowed.

The cloud service provides all the information a self-hosted gateway requires, such as the flow control policy for each API. If the self-hosted gateway doesn’t have any local storage, it holds all of this information only in memory. Imagine the self-hosted gateway fails: everything it held in memory is lost with it. To avoid such a scenario, a self-hosted gateway can be given local persistent storage that holds a copy of this configuration.
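The difference between memory-only configuration and a local persistent copy can be sketched as follows. This is a simplified model written for this article, not the gateway's actual code: `GatewayConfigCache`, the `fetch` callback, the file name, and the sample policy are all hypothetical. It assumes the gateway prefers fresh configuration from the cloud service and falls back to the local copy only when the service cannot be reached.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Optional


class GatewayConfigCache:
    """Hypothetical model of how a gateway might keep its configuration."""

    def __init__(self, fetch: Callable[[], dict],
                 backup_path: Optional[Path] = None):
        self._fetch = fetch              # pulls configuration from the cloud
        self._backup_path = backup_path  # None models "no local storage"
        self._config: Optional[dict] = None  # in-memory copy, lost on failure

    def start(self) -> dict:
        """Load configuration on (re)start, preferring the cloud service."""
        try:
            self._config = self._fetch()
            if self._backup_path is not None:
                self._backup_path.write_text(json.dumps(self._config))
        except ConnectionError:
            if self._backup_path is None or not self._backup_path.exists():
                raise  # nothing in memory and nothing on disk
            self._config = json.loads(self._backup_path.read_text())
        return self._config


def cloud_up() -> dict:
    # Made-up policy data standing in for per-API flow control settings.
    return {"orders-api": {"rate_limit_per_minute": 60}}


def cloud_down() -> dict:
    raise ConnectionError("management service unreachable")


with tempfile.TemporaryDirectory() as tmp:
    backup = Path(tmp) / "gateway-config.json"
    GatewayConfigCache(cloud_up, backup).start()  # first start writes the copy

    # The gateway fails and restarts while the cloud service is unreachable.
    recovered = GatewayConfigCache(cloud_down, backup).start()

    try:
        GatewayConfigCache(cloud_down).start()  # same outage, no local storage
        memory_only_started = True
    except ConnectionError:
        memory_only_started = False

print(recovered)            # {'orders-api': {'rate_limit_per_minute': 60}}
print(memory_only_started)  # False
```

In this model the gateway with persistent storage comes back with its last known policies, while the memory-only gateway cannot start until the cloud service is reachable again.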
